Quick detour from my planned content. This weekend we had the opportunity to pick up a collection of nearly 300 records for $100. We checked just enough to make sure we weren’t buying a half ton of vinyl chips, then brought home boxes of LPs.

Cataloging the haul was a nice opportunity to build a multimodal cataloging agent that interprets album sleeve images, enriches the extracted details with database lookups, estimates value, and logs each result to a shared inventory sheet.

The system had two primary goals: (a) catalog and classify the finds, and (b) get a basic valuation, with a flag for anything that might be a hidden gem worth a closer look. The classification scheme was simple: sell, keep for listening, or keep for arts and crafts.
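The two goals and the three-way classification map naturally onto a small record type. Here’s a minimal sketch; the class names, field names, and the rule that a flagged entry must carry a reason are hypothetical choices for illustration, not necessarily how the actual inventory sheet is laid out.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Disposition(Enum):
    """Hypothetical names for the three classification buckets."""
    SELL = "sell"
    LISTEN = "keep for listening"
    CRAFTS = "keep for arts and crafts"


@dataclass
class CatalogEntry:
    """One album in the inventory (hypothetical fields)."""
    artist: str
    title: str
    disposition: Disposition
    lowest_price: Optional[float] = None  # basic valuation, if one was found
    investigate: bool = False             # the "hidden gem" flag
    reason: str = ""                      # why the entry was flagged

    def __post_init__(self) -> None:
        # A flag without an explanation isn't actionable, so require one.
        if self.investigate and not self.reason:
            raise ValueError("flagged entries need a reason")
```

Using an enum means a text answer from the model can be mapped with `Disposition("sell")`, which raises `ValueError` for anything outside the three buckets instead of silently writing a fourth category to the sheet.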

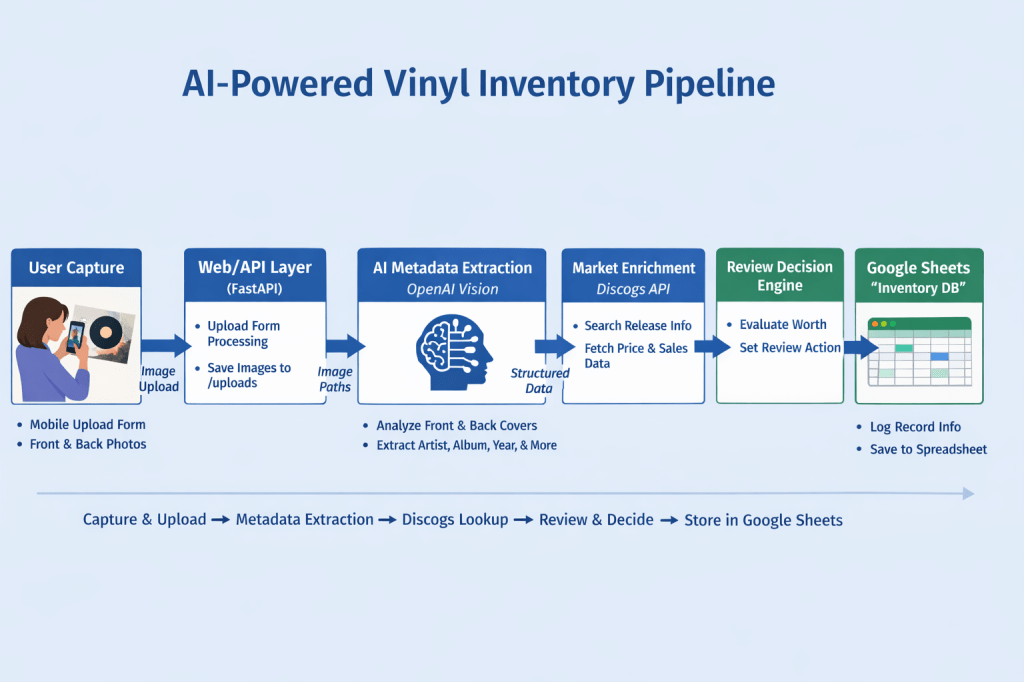

Here’s a visual of the system flow:

I hosted the project on a DigitalOcean droplet, the same place I run the backend for my iOS app and web app. Almost all of the code is Python and HTML. Here’s how the agent works:

- The user navigates to a web page and submits photos of the album’s front and back covers.

- The images are stored on the droplet.

- API calls are made to OpenAI Vision to extract information about the album from the images.

- An API call is made to Discogs to find the Discogs ID for the album. If a barcode was found via OpenAI Vision, the barcode is prioritized over artist and title data.

- A second Discogs API call retrieves the number of copies currently listed for sale and the lowest current asking price.

- The agent reviews the available data and determines whether to recommend further investigation of the album’s potential value.

- An API call to Google Sheets writes a new record in our cataloging sheet.

- The web page provides a report to the user. If the agent recommends investigating further, it provides a reason and suggested actions.
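The lookup-and-decide steps above can be sketched as plain functions. This is a minimal sketch under several assumptions: that the vision call is prompted to return JSON with `artist`, `title`, and `barcode` keys; that the search uses Discogs’ `/database/search` endpoint (which accepts `barcode`, `artist`, and `release_title` parameters); that the stats come from `/marketplace/stats/{release_id}`, which reports `num_for_sale` and `lowest_price`; and that prices are in USD. The function names, thresholds, and sheet column order are hypothetical, and the fixed-threshold flag is a rule-based stand-in for the judgment the agent makes in this project. The HTTP, OpenAI, and Sheets calls are left out so the logic can be tested offline.

```python
import json
from typing import Optional

# Hypothetical thresholds for the "hidden gem" flag; tune to taste.
PRICE_FLAG_USD = 25.0  # a lowest listing at or above this deserves a closer look
SCARCITY_FLAG = 3      # this many or fewer copies for sale suggests scarcity


def parse_vision_reply(reply: str) -> dict:
    """Parse the vision model's reply, assuming it was asked for a JSON
    object with "artist", "title", and "barcode" keys."""
    data = json.loads(reply)
    return {
        "artist": str(data.get("artist") or "").strip(),
        "title": str(data.get("title") or "").strip(),
        # Keep only digits so "0 74646 93552 6" and "074646935526" match.
        "barcode": "".join(ch for ch in str(data.get("barcode") or "") if ch.isdigit()),
    }


def build_search_params(extracted: dict) -> dict:
    """Query parameters for Discogs' /database/search endpoint.

    A barcode, when present, is used instead of artist/title because it
    is far more specific than a name match.
    """
    params = {"type": "release"}
    if extracted.get("barcode"):
        params["barcode"] = extracted["barcode"]
    else:
        params["artist"] = extracted.get("artist", "")
        params["release_title"] = extracted.get("title", "")
    return params


def parse_stats(stats: dict) -> tuple[int, Optional[float]]:
    """Pull the listing count and lowest price out of a marketplace stats payload."""
    lowest = stats.get("lowest_price")  # null when nothing is listed
    price = float(lowest["value"]) if lowest else None
    return int(stats.get("num_for_sale") or 0), price


def investigation_reason(num_for_sale: int, lowest_price: Optional[float]) -> Optional[str]:
    """Return a reason to investigate further, or None if nothing stands out."""
    if lowest_price is not None and lowest_price >= PRICE_FLAG_USD:
        return f"Lowest listing is ${lowest_price:.2f}; check pressing and condition."
    if num_for_sale <= SCARCITY_FLAG:
        return f"Only {num_for_sale} listed for sale; may be scarce."
    return None


def sheet_row(extracted: dict, release_id: Optional[int],
              num_for_sale: int, lowest_price: Optional[float]) -> list:
    """Flatten one album into a row for the inventory sheet."""
    reason = investigation_reason(num_for_sale, lowest_price)
    return [
        extracted.get("artist", ""),
        extracted.get("title", ""),
        release_id or "",
        num_for_sale,
        "" if lowest_price is None else lowest_price,
        "YES" if reason else "",
        reason or "",
    ]
```

For example, `build_search_params({"artist": "Miles Davis", "title": "Kind of Blue", "barcode": ""})` falls back to name matching and returns `{'type': 'release', 'artist': 'Miles Davis', 'release_title': 'Kind of Blue'}`. With a Sheets client such as gspread, the finished row could be written with `worksheet.append_row(row)`.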

Agentic Behavior

Besides making progress on my cataloging task, I enjoyed this bit of work because it’s a compact but complete example of what agents should do. The agent accepts external input, extracts meaning from unstructured data, and makes decisions. It uses tools and takes real-world actions.

That last point is key. Generative AI is a fantastic tool, but it’s largely a passive advisor: an extremely sophisticated one with incredible resources, but the next actions are almost entirely up to the user.

An agent, on the other hand, should have agency. It should perform actions that produce benefits such as saving time, reducing the likelihood of error, and making resources more usable. The more agents are able to work safely yet autonomously, the greater the benefit will be.

One last note about this fun experiment: I’m quite happy to let my agent write to my Google Sheets album inventory, but it won’t get autonomy over my bank account anytime soon. As numerous OpenClaw stories demonstrate, guardrails should be sunk firmly into the foundation of agent design. That’s probably a good topic for an entire series of posts…
